Quick Start

The Oxen.ai chat completions API is fully OpenAI-compatible. You can use the OpenAI SDK,curl, or any HTTP client that speaks the OpenAI chat format.

Base URL: https://hub.oxen.ai/api

Endpoint: POST /chat/completions

Browse all available models.

Authentication

Every request requires a Bearer token in theAuthorization header. You can find your API key in your account settings.

Response Format

The API returns an OpenAI-compatible JSON response:| Field | Description |

|---|---|

id | Unique identifier for the completion |

object | Always "chat.completion" |

created | Unix timestamp of when the completion was created |

model | The model that generated the response |

choices | Array of completion choices (typically one) |

choices[].message.content | The generated text |

choices[].finish_reason | Why generation stopped: "stop" (natural end) or "length" (hit max_tokens) |

usage | Token counts for the request |

Parameters

| Parameter | Type | Default | Description |

|---|---|---|---|

model | string | required | Model name, e.g. "claude-sonnet-4-6", "gpt-4o", "gemini-3-flash-preview" |

messages | array | required | Array of message objects with role and content |

max_tokens | integer | model default | Maximum number of tokens to generate |

temperature | float | model default | Sampling temperature (0-2). Lower is more deterministic. |

stream | boolean | false | Enable streaming with server-sent events |

Messages

Each message in themessages array has a role and content:

| Role | Description |

|---|---|

system | Sets the behavior and context for the model |

user | The user’s input |

assistant | Previous model responses (for multi-turn conversations) |

Streaming

Set"stream": true to receive responses as server-sent events (SSE). Each event is a chat.completion.chunk object with a delta instead of a message.

data: and contains a JSON chunk:

Vision

Models that support vision (such asgpt-4o or claude-sonnet-4-6) accept images in the messages array. For full details and examples including base64 encoding and video understanding, see Vision Language Models.

Tool use

Tool calling (function calling) follows the same OpenAI Chat Completions tool format. You send atools array describing each function’s JSON Schema; the model may reply with tool_calls instead of plain text. You execute those functions in your app, then send the results back in new tool messages so the model can finish the answer.

| Concept | Description |

|---|---|

tools | Array of { "type": "function", "function": { "name", "description", "parameters" } } objects. parameters is a JSON Schema object for the arguments. |

tool_choice | Optional. "auto" (default) lets the model decide; "none" disables tools; or force a specific function with {"type": "function", "function": {"name": "..."}}. |

Assistant tool_calls | When finish_reason is "tool_calls", choices[0].message.tool_calls lists each call with id, function.name, and function.arguments (a JSON string). |

tool messages | Each result uses role: "tool", tool_call_id matching the call’s id, and content as a string (often JSON your tool returned). |

Raw curl: first request (tools only)

The model may respond with tool_calls instead of user-facing content:

tool_calls, and one tool message per call. Replace IDs and tool_calls with values from the first response. Repeat until finish_reason is "stop" (or "length") and there are no new tool_calls.

Follow-up request: curl and OpenAI Python SDK

The follow-up HTTP body matches what the OpenAI SDK builds when you append assistant and tool messages in a loop.

Errors

The API returns errors as JSON with anerror object and a standard HTTP status code.

| Status | Meaning |

|---|---|

400 | Bad request (missing model, empty messages, invalid parameters) |

401 | Invalid or missing API key |

429 | Rate limit exceeded |

500 | Internal server error |

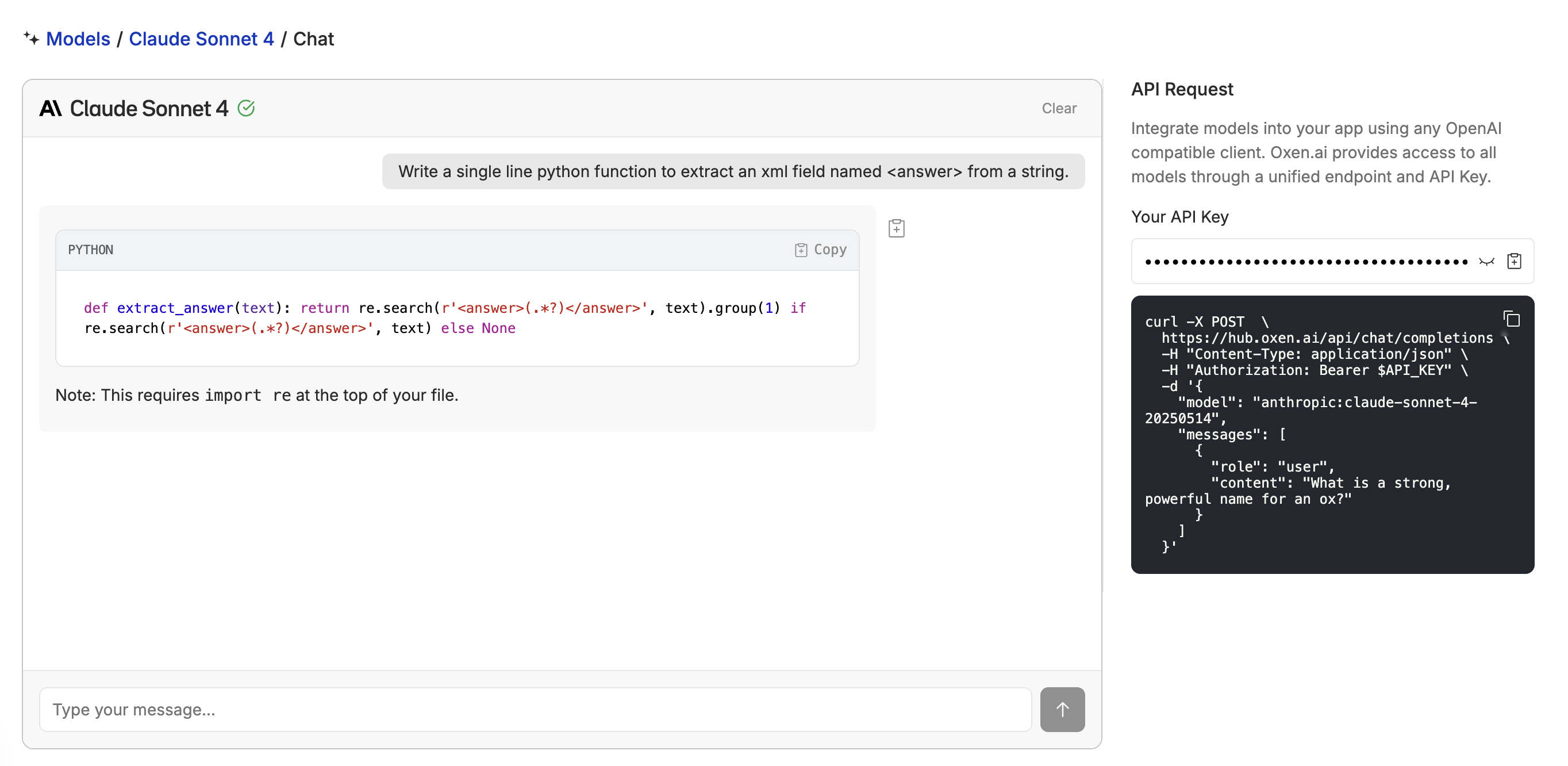

Playground

The model playground lets you test any model interactively before writing code. This is also a great way to test models you’ve fine-tuned after deploying them.